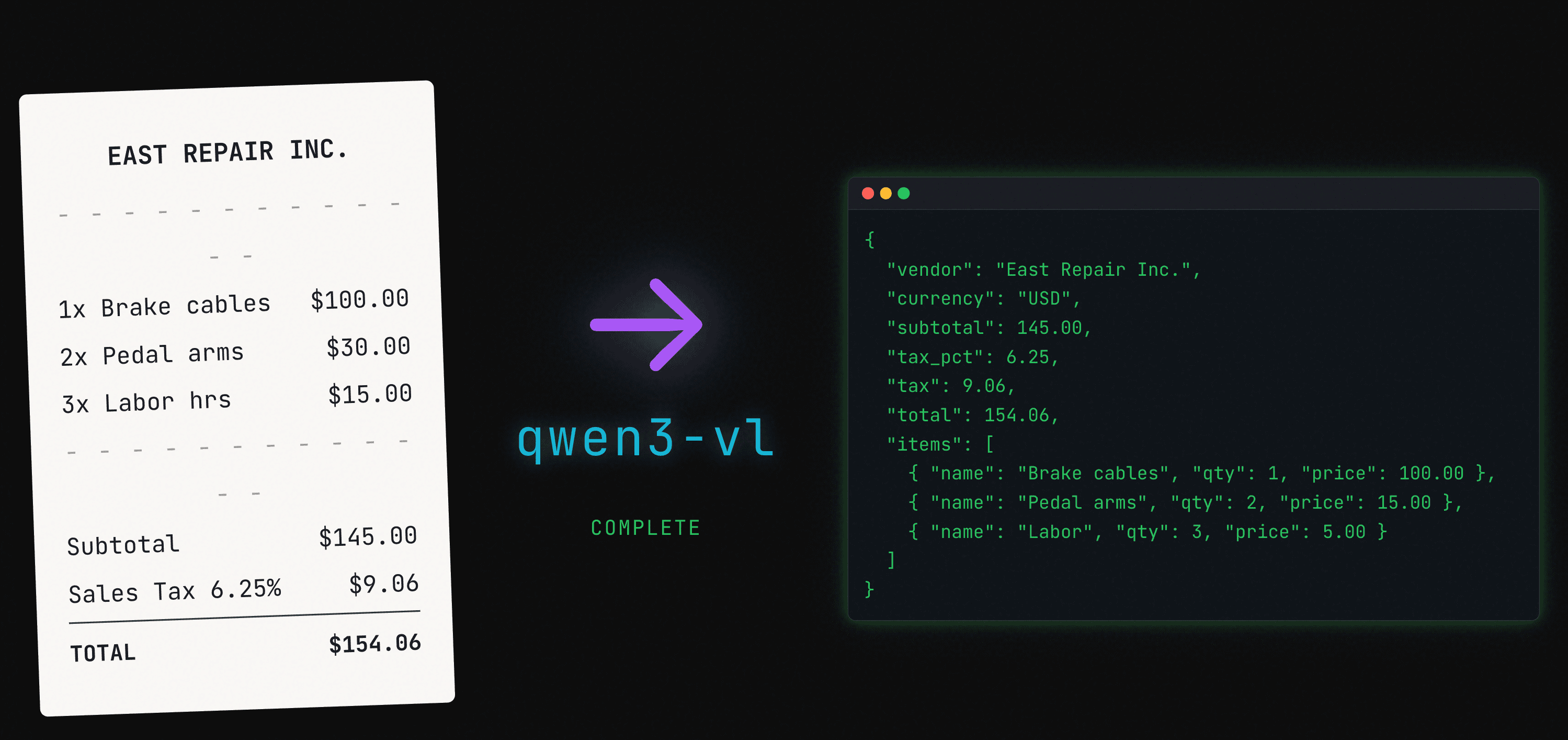

Setting Up Your Self-Hosted AI Stack - Part 3: From Receipts to Structured Data with Vision Language Models and n8n

Automate expense reporting with smart document processing. Transform receipts and invoices into structured data using VLMs and workflow automation.

I'm Farzam, a Software Engineer specializing in backend development. My mission: Collaborate, share proven tricks, and help you avoid the pricey surprises I've encountered along the way.

It's Been a While

Sorry for being away for so long. I've been head-down strengthening my software engineering foundation—consuming rather than producing. After a while, I started feeling this pull to balance things out by building again.

I just finished Computer Systems: A Programmer's Perspective (CS:APP), and I can't recommend it enough. If you've ever felt gaps in your foundational knowledge, this book fills them. It's one of the best technical books I've read. Now I'm working through AI Engineering by Chip Huyen, and it felt like the right time to pick up where we left off.

Why Read This

My background is software engineering, not machine learning. Over the past year I've shifted into what Chip calls "AI engineering": building applications on top of foundation models. I want to share what I've learned with those who haven't yet gotten the chance to experience building in this space.

Life is demanding and the field keeps moving. Not everyone has time to keep up—reading papers, watching tutorials, building side projects. That's exactly why I'm building this series: real projects, real source code, walked through step by step. A way to stay current with what's actually possible.

What We're Building

A 10GB model. A 67-line prompt. 7 commands to set up. 2 config values.

That's what turns a crumpled receipt photo into structured JSON—line items, taxes, discounts, multiple currencies. All running locally on your laptop.

From this:

To this:

{

"currency": "USD",

"receipt_type": "service",

"tax_format": "added",

"receipt_subtotal": 145.00,

"receipt_total_tax_amount": 9.06,

"receipt_total_tax_percentage": 6.25,

"receipt_total": 154.06,

"items": [

{

"item_name": "Front and rear brake cables",

"item_quantity": 1,

"item_unit_price": 100.00,

"item_total_price": 100.00,

"confidence_score": 0.95

},

{

"item_name": "New set of pedal arms",

"item_quantity": 2,

"item_unit_price": 15.00,

"item_total_price": 30.00,

"confidence_score": 0.95

},

{

"item_name": "Labor 3hrs",

"item_quantity": 3,

"item_unit_price": 5.00,

"item_total_price": 15.00,

"confidence_score": 0.95

}

]

}

Note: The model correctly handles quantity math (2 × $15 = $30), extracts tax separately (6.25% = $9.06), and assigns confidence scores to each extraction.

Let me show you how.

Did You Know: How VLMs "See" Images

Vision Language Models don't process images like humans. They divide images into patches (typically 14×14 or 16×16 pixels), encode them through a vision transformer, then project into the language model's embedding space. Each patch becomes a "token" the model reasons about—similar to how words become tokens in text. A single receipt image might become hundreds or even thousands of visual tokens depending on resolution.

Before We Start

You'll need:

| Tool | Purpose | Install |

| Docker & Docker Compose | Container orchestration | docs.docker.com/get-docker |

| Ollama | Local LLM runtime | ollama.com/download |

| Python 3.8+ | Setup script | Usually pre-installed on macOS/Linux |

Hardware: 16GB RAM minimum, 24GB+ recommended for smooth VLM inference.

No GPU or limited RAM? You can use Ollama's cloud models instead of running locally. See the Cloud Alternative section.

Model: We'll use qwen3-vl:8b-thinking-q8_0 (~10GB). Pull it now:

ollama pull qwen3-vl:8b-thinking-q8_0

Everything else runs in Docker.

Why Vision Language Models

Traditional OCR reads text without understanding context. Vision Language Models understand what they're looking at.

When processing a restaurant receipt:

OCR sees: "Total: $47.83"

VLM understands: This is the final amount, separate from subtotal and tax

When processing an invoice:

OCR sees: "Net 30"

VLM understands: This is payment terms, not an amount

VLMs read documents like humans do—understanding layout, context, and meaning.

The Three Pillars

1. Vision Language Model (qwen3-vl)

The "eyes" that read and understand receipts and invoices. It processes images, understands document layouts (headers, line items, totals), and outputs structured JSON data.

2. n8n Workflow Engine

The "brain" that orchestrates the entire process. It handles file uploads and validation, manages job queuing and processing, and coordinates data extraction and export through visual workflows.

Also our backend. n8n's webhooks serve as our API layer—no separate backend needed. It handles job creation, result fetching, and CSV exports directly.

3. Web Upload Interface

The "front door" for easy document submission. Drag-and-drop interface with progress tracking and status updates, plus the ability to download processed results.

Did You Know: Why Visual Workflow Tools Matter

n8n workflows are JSON under the hood. Each node has inputs, outputs, and configuration. The visual builder prevents syntax errors and makes debugging visible—you can see exactly where data flows and where it breaks.

Pro tip I learned the hard way: Build workflows in the UI first, export them, then modify the JSON if needed. Trying to write n8n workflow JSON from scratch (even with AI assistance) often leads to syntax issues that take forever to debug.

System Architecture

The Five Services

Our Docker Compose stack orchestrates five services:

postgres: PostgreSQL 15 Alpine for job tracking and extracted data

db-migrator: Runs once on startup to apply SQL migrations

n8n: Workflow engine (v2.4.8) with 6 workflows orchestrating the pipeline

web: Nginx Alpine serving the upload interface

pgadmin: Optional database admin panel

Data Flow

The Philosophy: Setup Done Right

7 Commands. 2 Config Values.

I believe automation should respect your time. If you can't get from zero to working in under 10 minutes, something's wrong with the design.

Here's the complete setup:

git clone https://github.com/FarzamMohammadi/self-hosted-ai-stack.git

cd self-hosted-ai-stack/part-3-receipt-processing-with-vlm

cp .env.example .env

ollama pull qwen3-vl:8b-thinking-q8_0

# Edit .env: set OLLAMA_VLM_MODEL

docker-compose up -d

# Open http://localhost:5678 → Settings → n8n API → Create API Key

# Add N8N_API_KEY=<your-key> to .env

python scripts/setup-n8n.py

docker compose restart n8n

Done. The system is running:

Web Interface: http://localhost:8080

n8n Workflows: http://localhost:5678

pgAdmin: http://localhost:5050

What Happens Behind the Scenes

When you run docker-compose up, a carefully orchestrated sequence unfolds:

PostgreSQL starts and reports healthy (not just "running")

Migrations run—in dependency order, wrapped in transactions

n8n waits for migrations to succeed, not just complete

Web server starts only after n8n is ready

This isn't accidental. The compose file uses service_healthy and service_completed_successfully conditions—the strictest dependency types Docker offers. If migrations fail, n8n doesn't start. No silent failures. No corrupt state.

When you run setup-n8n.py, a second orchestration happens:

Validates your API key and database connection

Creates PostgreSQL credentials inside n8n

Uploads all 6 workflows from JSON files

Activates each workflow

The restart at the end? A workaround for a known n8n bug where webhooks created via API don't register until the container restarts.

The Small Things That Matter

Sensible defaults. Only 2 required config values. Database credentials, ports, timeouts—all have working defaults.

Helpful errors. When something fails, the error tells you what to do, not just what went wrong:

Invalid N8N_API_KEY

Go to Settings → n8n API in n8n UI to create a valid API key

Idempotent operations. Run the setup script twice? It skips what's already done. Migrations already applied? Tracked and skipped. Safe to re-run, safe to experiment.

I have a deep love for automation—there's something satisfying about simplifying processes until they just work. Every automated step is one less thing to remember, one less way to fail. Great developer experience isn't just for others; it's for future you at 2am, six months from now, debugging something you've completely forgotten.

Processing a Receipt Together

You have the repo cloned. You have the stack running. Let's use it.

Step 1: Upload a Receipt

Try it: Navigate to http://localhost:8080 in your browser. You'll see the upload interface with a drag-and-drop zone.

Need a test receipt? There are 4 included in test-receipts/. Or use any receipt photo from your phone.

Drag your receipt onto the zone (or click to browse). You'll see a progress bar, then a success message showing the filename and "Pending" status. Two buttons appear: "Upload Another" and "View All Receipts".

What n8n does behind the scenes:

Validates file type (JPEG, PNG, WEBP only) and size (max 10MB)

Generates a UUID for the receipt

Creates a date-based path:

/app/uploads/receipts/2026/02/02/uuid-filename.jpgSaves the file to disk (persisted to

volumes/uploads/on host)Creates a receipt record in PostgreSQL (status:

pending)Creates a processing job in the queue

Returns the job ID to the user

Key files:

web/index.html- The upload pageweb/js/upload.js- Handles drag-drop, validation, and API callsn8n/workflows/receipt-job-creation.json- Workflow 1: Receipt Upload & Job Creation

Did You Know: Why UUIDs + Date Paths?

UUIDs prevent collisions even in distributed systems—two users uploading

receipt.jpgat the same millisecond get unique filenames. Date-based paths (/2026/02/02/) make archival and cleanup trivial: delete everything older than 90 days with a simple directory operation. File hashing provides basic duplicate detection—the system can flag if you're uploading the same receipt twice.

Step 2: What Gets Stored

Here's what lands in the database when you upload:

-- receipts table (simplified)

id: UUID

filename: "sample-receipt.jpg"

file_path: "/app/uploads/receipts/2026/02/02/abc123-sample-receipt.jpg"

file_size: 245000

mime_type: "image/jpeg"

file_hash: "a1b2c3d4..."

processing_status: "pending"

items: NULL

created_at: NOW()

Key file: db/migrations/00001-receipts.sql

Did You Know: Why JSONB for AI Output?

AI models return unpredictable structures. What if a receipt has 3 items? Or 30? What if some have discounts and others don't? JSONB stores flexible JSON with full indexing support (GIN indexes). You can query into it (

WHERE items->>'item_name' LIKE '%coffee%'), index specific fields, and evolve the schema without migrations.

Step 3: View Your Receipts

Try it: Click "View All Receipts", or navigate directly to http://localhost:8080/receipts.html.

You'll see a table with columns: thumbnail, filename, upload date, status, items count, and confidence score. The receipt you just uploaded shows "Pending" status—the AI hasn't processed it yet.

Behind the scenes: The receipts page calls Workflow 4: Receipt List Provider (receipt-list-provider.json) to fetch receipts with filters and pagination.

Key files:

web/receipts.html- The management pageweb/js/receipts.js- List, filter, detail, export logicn8n/workflows/receipt-list-provider.json- Workflow 4: Receipt List Provider

Step 4: Watch the Processing

Try it: Find the "Auto-refresh (10s)" toggle in the top right and enable it. Or click "Refresh" manually every few seconds.

Within 30-60 seconds, watch the status change: Pending → Processing → Completed. Once complete, the "Items" column shows how many line items were extracted, and a confidence score appears.

What happens behind the scenes:

Every 30 seconds, Workflow 2: Receipt VLM Processing Queue Monitor polls the database for pending jobs and triggers the VLM processor for each one.

Why 30 seconds? It's a balance between latency and resource efficiency. For a small business processing a few receipts a day, this works well. For high volume, you can reduce it.

Key file: n8n/workflows/receipt-vlm-processing-queue-monitor.json - Workflow 2: Receipt VLM Processing Queue Monitor

Step 5: The AI Magic (VLM Processing)

When Workflow 2 finds your pending job, it triggers Workflow 3: Receipt VLM Processing & Item Extraction. This is where the AI reads your receipt.

What Workflow 3 does:

Fetches the receipt file from disk

Encodes the image to base64

Sends the image + extraction prompt to Ollama

Parses the JSON response

Updates the receipt record with extracted items

Updates the job status (completed or failed)

If failed, retries up to 3 times

Using cloud? Same workflow works—see Cloud Alternative.

Key file: n8n/workflows/receipt-vlm-processor.json - Workflow 3: Receipt VLM Processing & Item Extraction

Step 6: The Extraction Prompt

Here's the prompt that tells the VLM how to extract receipt data (v9):

Extract items AND totals from this receipt image as JSON.

Output format:

{

"currency": "USD",

"receipt_type": "grocery|restaurant|retail|service|unknown",

"tax_format": "added|inclusive|none",

"receipt_subtotal": 0.00,

"receipt_total_tax_amount": 0.00,

"receipt_total_tax_percentage": 0.00,

"receipt_total": 0.00,

"items": [

{

"item_name": "Product Name",

"item_quantity": 1,

"item_unit_price": 0.00,

"item_base_price": 0.00,

"item_discount_amount": null,

"item_tax_price": null,

"item_total_price": 0.00,

"item_sequence": 1,

"confidence_score": 0.95,

...

}

]

}

Rules:

TAX HANDLING - Identify the tax format first:

1. TAX ADDED (US/Canada style):

- Look for separate "Subtotal", "Tax/Sales Tax", and "Total" lines

- Total = Subtotal + Tax

- tax_format = "added"

2. TAX INCLUSIVE (EU/Swiss style):

- Look for "Total" with "Incl. X% MwSt/VAT/TVA: amount"

- Tax is already part of the total, not added on top

- tax_format = "inclusive"

- receipt_subtotal = receipt_total - receipt_total_tax_amount

3. NO TAX SHOWN:

- tax_format = "none", set tax fields to null

OTHER RULES:

- Remove quantity prefixes (1x, 2x) from item_name, put in item_quantity

- item_total_price = item_base_price - item_discount_amount (when discount exists)

- Math: item_quantity × item_unit_price = item_base_price

- Return JSON only

Key file: prompts/ocr/v9-system-prompt.md

67 lines. Clear output schema. Essential rules only. This simplicity is intentional—and hard-won. More on that below.

Did You Know: The LLM Dictionary and Tokenization

LLMs don't see characters—they see tokens. Each model has a "vocabulary" (dictionary) of ~32,000-100,000 tokens. Common words like "the" are single tokens, while rare words get split ("tokenization" might become "token" + "ization").

Why does this matter for prompts? The model processes tokens, not text. A well-structured JSON schema is unambiguous—the model knows exactly what format you expect. Generic placeholders like

0.00or"Product Name"are familiar patterns from training data. This is why clear schemas work better than verbose explanations—they're precise, not just shorter.

Step 7: Results Stored in Database

Once processing completes, here's what gets saved:

-- Updated receipt record

processing_status: "completed"

items: [

{

"item_name": "Front and rear brake cables",

"item_quantity": 1,

"item_unit_price": 100.00,

"item_total_price": 100.00,

"confidence_score": 0.95

},

{

"item_name": "New set of pedal arms",

"item_quantity": 2,

"item_unit_price": 15.00,

"item_total_price": 30.00,

"confidence_score": 0.95

},

...

]

items_count: 3

total_confidence_score: 0.95

receipt_subtotal: 145.00

receipt_total_tax_amount: 9.06

receipt_total: 154.06

The JSONB column holds all extracted items with their confidence scores, while computed fields (items_count, total_confidence_score) enable fast queries without re-parsing the JSON.

Step 8: View the Extracted Data

Try it: On the receipts page (http://localhost:8080/receipts.html), click any completed receipt row to open the detail modal.

What you'll see:

Left side: The original receipt image (scroll to zoom)

Right side: Extracted metadata (filename, date, size, status, currency, receipt type) and a table of extracted items

Each item shows: name, quantity, unit price, base price, discount (if any), tax, total price, and a confidence score.

Behind the scenes: Workflow 5: Receipt Detail Provider (receipt-detail-provider.json) fetches the receipt record with the items array.

Did You Know: What Confidence Scores Actually Mean

Confidence isn't probability of correctness. It's the model's internal certainty about its output—how "surprised" it would be if wrong. A 0.95 confidence means the model is very sure about its answer. But high confidence + wrong answer = hallucination territory. That's why we use confidence thresholds:

90-100%: Auto-approve for accounting

70-89%: Quick manual review

50-69%: Detailed review required

Below 50%: Manual entry likely faster

Step 9: Export Your Data

Try it: Click the "Export CSV" button in the header to download all completed receipts.

The CSV includes: receipt ID, filename, upload date, currency, receipt type, subtotal, tax, total, and all extracted line items flattened into columns.

What Workflows 4-6 do:

Workflow 4: Receipt List Provider (

receipt-list-provider.json): List receipts with filters and paginationWorkflow 5: Receipt Detail Provider (

receipt-detail-provider.json): Get receipt detail with items arrayWorkflow 6: Receipt Export Generator (

receipt-export-generator.json): Export completed receipts to CSV

Key files:

web/receipts.html- The management pageweb/js/receipts.js- List, filter, detail, export logicn8n/workflows/receipt-list-provider.jsonn8n/workflows/receipt-detail-provider.jsonn8n/workflows/receipt-export-generator.json

The Art of Simplicity: A Prompt Optimization Journey

When More Is Less

This is the lesson that humbled me most during this project.

The receipt processing system needed to handle diverse formats—different currencies (USD, CHF, GBP), quantity notations (2x, x3, QTY columns), discounts, and tax calculations. My original prompt was a 400+ line behemoth, complete with:

Detailed parsing approaches for every scenario

Five examples with step-by-step breakdowns

Mathematical validation checklists

Multiple tax handling patterns

Exhaustive field documentation

On paper, it looked thorough. Professional, even. I was proud of it.

In practice? Maybe 60-70% accuracy. Items would be missed, quantities misinterpreted, discounts incorrectly calculated. The model seemed to be... overthinking.

The Iterative Discovery

I set up a systematic testing pipeline with four diverse receipt samples:

A USD receipt with items and tax at bottom

A Swiss CHF receipt with quantity prefixes (2x Latte Macchiato) and European formatting

A US repair invoice with QTY columns and tabular data

A UK receipt with line-level discounts and manager overrides

What followed was an exercise in humility. After multiple iterations—tweaking parameters, adjusting examples, refining instructions—I had a revelation: What if the problem isn't that I'm not explaining enough, but that I'm explaining too much?

I stripped the prompt down. Out went the five detailed examples. Out went the philosophical explanations about tax patterns. Out went the validation checklists. What remained:

Extract items from this receipt image as JSON.

Output format:

{

"currency": "USD",

"has_total_tax_only": true,

"items": [

{

"item_name": "Product Name",

"item_quantity": 1,

"item_unit_price": 5.00,

"item_base_price": 5.00,

"item_discount_amount": null,

"item_total_price": 5.00,

...

}

]

}

Rules:

- has_total_tax_only=true unless tax is shown on EACH item line separately

- Remove quantity prefixes (1x, 2x) from item_name, put in item_quantity

- item_total_price = item_base_price - item_discount_amount (when discount exists)

- Math: item_quantity × item_unit_price = item_base_price

- Return JSON only

About 30 lines instead of 400+.

The Results

I ran stability tests—five times per receipt with varying random seeds:

| Receipt | Runs | Result |

| Simple USD receipt | 5/5 | All identical |

| Swiss CHF with quantities | 5/5 | All identical |

| US invoice with QTY column | 5/5 | All identical |

| UK receipt with discounts | 5/5 | All identical |

20 out of 20 runs passed. Every quantity correct. Every discount captured. Every currency identified. The math checked out perfectly.

I stared at the results, equal parts vindicated and embarrassed. All those hours crafting the perfect 400-line prompt, and the model just needed me to get out of its way.

The Lesson

The model already knew how to read receipts. It had seen millions of them during training. My elaborate prompt wasn't teaching it anything—it was confusing it.

When I bombarded it with examples, edge cases, and detailed instructions, the model tried to reconcile all that information with every image it saw. It started second-guessing obvious interpretations. It looked for complexities that weren't there.

The simple prompt worked because it:

Clearly defined the output format - Show, don't tell

Stated only essential rules - Five rules instead of fifty paragraphs

Trusted the model's inherent capabilities - It knows what a receipt looks like

Avoided overthinking triggers - No examples that might not match the current image

Broader Implications

This principle extends beyond receipt processing:

Prompt engineering is often about subtraction, not addition

Clear output schemas beat extensive explanations

Trust the model's training—it's seen more examples than you can write

Test systematically with diverse inputs to validate changes

As Antoine de Saint-Exupéry wrote: "Perfection is achieved, not when there is nothing more to add, but when there is nothing left to take away."

The journey from 400 lines to 30 was a reminder that in AI engineering, simplicity isn't just elegant—it's effective.

Testing Methodology: Verify Before You Deploy

The Trap: Teaching to the Test

After discovering the power of simplicity, I needed to extend the prompt to capture receipt-level totals (subtotal, tax, total)—not just individual items. Here's how I approached it.

My v7 prompt had a has_total_tax_only boolean flag but no fields to capture the actual tax amount. When processing a US invoice with:

Subtotal: $145.00

Sales Tax 6.25%: $9.06

Total: $154.06

The system would extract items correctly but lose the tax information entirely.

My first instinct was to add example values to the prompt:

"receipt_subtotal": 145.00,

"receipt_total_tax_amount": 9.06,

"receipt_total_tax_percentage": 6.25,

"receipt_total": 154.06

This "worked"—but I was essentially giving the model the answers. When testing on the same receipt that matched my example values, of course it succeeded. Training data that matches test data tells you nothing about real-world performance.

I've made this mistake before. You'd think I'd learn.

The Fix: Generic Placeholders

I revised to use zeros as placeholders, forcing the model to read the receipt:

"receipt_subtotal": 0.00,

"receipt_total_tax_amount": 0.00,

"receipt_total_tax_percentage": 0.00,

"receipt_total": 0.00

The Testing Protocol

Rather than running one test and calling it done, I ran 10 tests with different random seeds on the same receipt:

for seed in 43 44 45 46 47 48 49 50 51 52; do

curl -s http://localhost:11434/api/generate \

-d '{"model":"qwen3-vl:8b-thinking-q8_0", "seed":'$seed', ...}' \

| jq '{tax:.receipt_total_tax_amount, pct:.receipt_total_tax_percentage}'

done

US Invoice Results (Sample 3):

| Test | Subtotal | Tax | Tax % | Total | Items |

| 1 | 145.00 | 9.06 | 6.25 | 154.06 | 3 |

| 2 | 145.00 | 9.06 | 6.25 | 154.06 | 3 |

| ... | ... | ... | ... | ... | ... |

| 10 | 145.00 | 9.06 | 6.25 | 154.06 | 3 |

10/10 PASSED - Consistent extraction across all seeds.

Testing Across Receipt Types

One receipt type isn't enough. I tested against different formats:

Swiss Receipt (European inclusive tax):

Format: "Incl. 7.6% MwSt 54.50 CHF: 3.85"

Result: 1/5 passed—the prompt struggled with tax-inclusive formats

This revealed a gap: the v8 prompt handled US-style "tax added" receipts perfectly but needed refinement for European "tax included" formats. This led directly to the v9 prompt with explicit multi-region tax handling.

Key Takeaways

Never use real values as examples - Use generic placeholders (0.00, "Product Name") to test actual extraction ability

Run multiple seeds - A single successful test means nothing. Run 5-10 tests with different random seeds to verify consistency

Test diverse inputs - Different receipt formats (US tax-added, EU tax-inclusive, no-tax) expose edge cases

Document failures - A 1/5 pass rate tells you exactly where to focus next

Iterate systematically - Don't guess. Test, measure, refine, repeat

This transforms prompt engineering from art to science. You stop hoping your prompt works and start knowing when and how it fails.

When Things Go Wrong: Built-in Safeguards

Document processing hits edge cases. The system handles them:

AI Response Failures:

Automatic retry with up to 3 attempts

Failed jobs marked for manual review

Error logging for troubleshooting

Image Quality Issues:

Pre-validation catches unsupported file types

Confidence scores below thresholds flag items for review

User gets clear feedback on what went wrong

Processing Timeouts:

60-second timeout prevents hung processes

Failed jobs remain in queue for retry

Queue monitor continues processing other jobs

Image Quality Tips

For best results:

Resolution: 300-600 DPI ideal, 150 DPI minimum

Size: 1024px max width reduces processing time

Orientation: Right-side up (rotation affects accuracy)

Lighting: Good contrast between text and background

Format: JPG or PNG preferred

If you can't read it clearly, neither can the AI. Flatten creased receipts, use good lighting, fill the frame.

Did You Know: Image Preprocessing

Poor-quality images can be improved before sending to the VLM. Techniques like contrast enhancement (CLAHE), deskewing, sharpening, and binarization can make faded or noisy receipts more readable. Tools like OpenCV or PIL can automate this. We didn't implement preprocessing here, but it's worth exploring for consistently low-quality scans.

Common Issues

| Problem | Cause | Fix |

| VLM processing hangs or fails | Ollama not running | Run ollama serve or check curl http://localhost:11434/api/tags |

| Webhooks return 404 | Workflows not imported | Run python scripts/setup-n8n.py |

| Webhooks still 404 after import | Workflows not activated | Open n8n UI, activate each workflow, or restart: docker compose restart n8n |

What You've Built

This isn't just a receipt processor. It's a demonstration of what's possible when you combine:

Accessible AI—A 10GB model that runs on your laptop, not a cloud bill

Thoughtful automation—7 commands to deploy, 2 values to configure

Hard-won simplicity—A 67-line prompt that took 400+ lines to discover wasn't needed

Engineering care—Health checks, validation chains, helpful errors

The real achievement isn't the features. It's that it works—reliably, on modest hardware, without fighting you.

The Lessons

On prompts: Simplicity beats complexity. Trust the model's training.

On setup: Great developer experience starts with the little details. Automate what can be automated. Simplify until it just works.

On building: The best code is often the code you deleted.

Coming Up in Part 4

We'll take n8n to the next level with agent orchestration—building workflows that don't just process data, but make decisions and use tools:

AI agents that can call functions (calculators, web search, database queries)

Multi-step reasoning workflows

Agentic behavior patterns in n8n

The vision: Workflows that think, not just execute.

Resources

n8n Documentation - Workflow automation platform

Ollama - Local LLM hosting

qwen3-vl on Ollama - Vision Language Model

PostgreSQL JSONB - JSON operations

GitHub Repository - Full source code

Cloud Alternative: When Local Isn't an Option

If you have less than 16GB RAM or no dedicated GPU, use Ollama's cloud models instead. Same API, same workflow—just a different model name.

Setup:

# Update to Ollama v0.12+

ollama --version

# Download latest from https://ollama.com/download if needed

# Sign in

ollama signin

# Update .env

OLLAMA_VLM_MODEL=qwen3-vl:235b-cloud

Available vision models: qwen3-vl:235b-cloud (recommended), deepseek-v3.1:671b-cloud

Cost: Ollama Turbo is $20/month. Ollama states they don't retain your data.

Other providers: You can modify the n8n workflow to use OpenAI or other APIs by changing VLM_API_URL, adding auth headers, and adjusting the request format in receipt-vlm-processor.json.

This is Part 3 of the "Complete Self-Hosted AI Infrastructure" series. We're building increasingly sophisticated AI capabilities, all running locally on your machine. Thanks for joining me on this journey.